“Online Hoaxes, Chain Messages & Viral Disinformation” claims cover a wide set of allegations that specific messages, chain letters, or viral items are deliberately false, coordinated, or designed to mislead large audiences. This overview treats those claims as claims — not established fact — and examines documented evidence about origins, mechanisms, and why such materials spread online.

What the claim says

Broadly, the claim asserts that certain widely shared online items—ranging from superstition-style chain messages to political disinformation—are hoaxes or deliberately deceptive campaigns. Variants assert that these items are (a) deliberately manufactured to manipulate opinion, (b) spread by coordinated networks or bots, or (c) inherently viral because of content design (e.g., fear, novelty, or financial incentive). We analyze those different strands as separate, testable statements rather than treating the overall label as a single proven fact.

Where it came from and why it spread

Chain-message behavior predates the internet: printed chain letters and postal schemes circulated in the 19th and 20th centuries, and similar social-practice forms moved online as email, forums, and social media grew. Documented historical examples include early electronic chain letters such as the widely circulated “MAKE.MONEY.FAST” messages of the late 1980s and the superstition-style chain emails that persisted through the 1990s and 2000s. These historical artifacts show continuity between older chain-letter norms and modern viral messages.

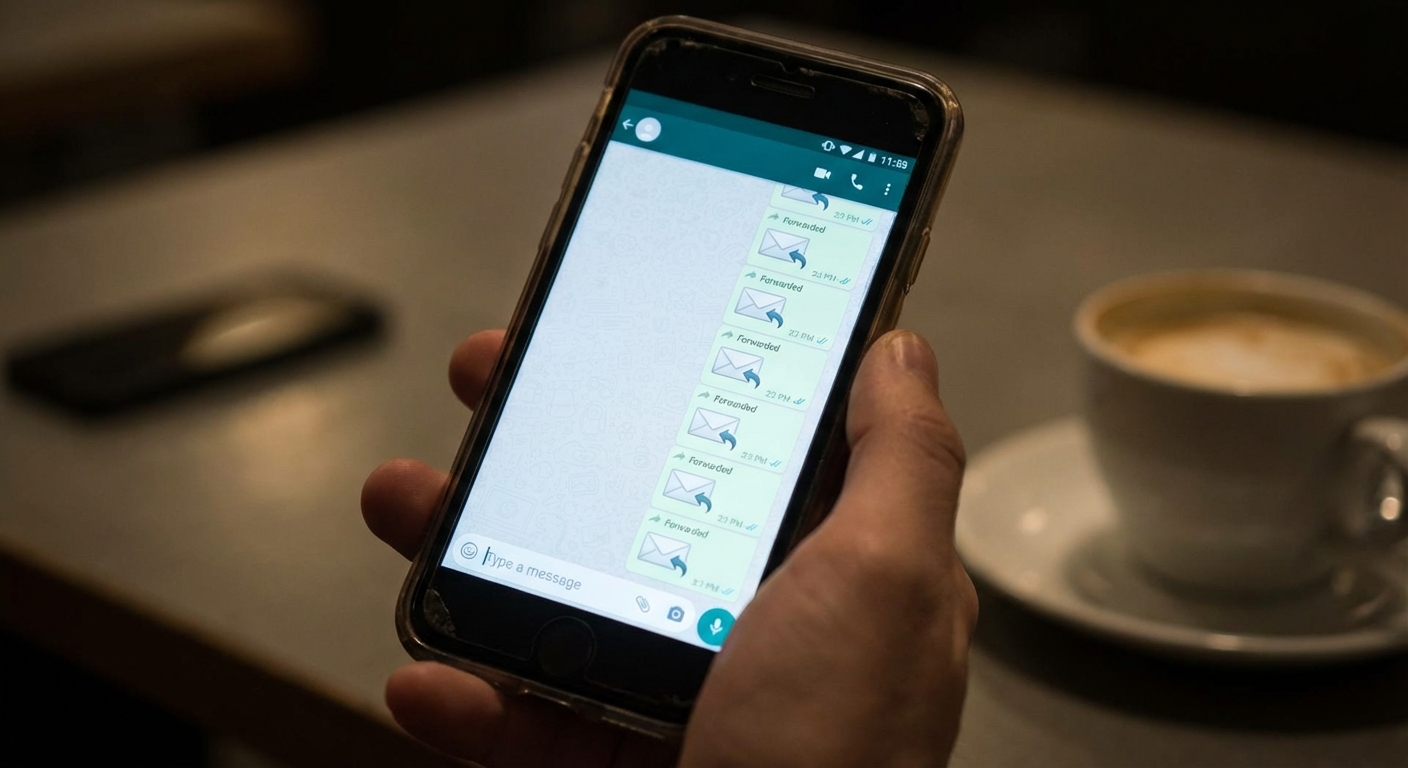

Contemporary viral disinformation draws on platform affordances (easy forwarding, algorithmic amplification, private group messaging) and on human cognitive and social factors. A large-scale study of Twitter by Vosoughi, Roy, and Aral found that false news stories spread farther, faster, and more broadly than true ones, and that human sharing behavior, not only automated bots, played a central role in their diffusion during the period studied. The researchers linked the greater diffusion of falsehoods to novelty and to emotional reactions that make people more likely to re-share.

Journalistic and academic reporting also ties the rise of modern viral hoaxes to cultural phenomena such as “copypasta” and “creepypasta” folklore (user-created, copy-and-paste stories) and to platform business models that reward engagement. These sources illustrate how entertainment-style viral content and malicious disinformation sometimes overlap in practice, even if their origins and intents differ.

What is documented vs what is inferred

Documented:

- There is peer-reviewed evidence (Vosoughi, Roy, and Aral, Science, 2018) that false news, as classified by independent fact-checking organizations, spread farther and faster than true news in a large-scale Twitter dataset covering 2006–2017.

- Historical continuity: chain-letter-style messages existed in print and postal forms before the internet and later adapted into email, forums, and social apps; specific early internet chain letters such as MAKE.MONEY.FAST are documented.

- Surveys and public-opinion research document widespread concern about online misinformation and frequent exposure to misleading content among social media users.

Inferred or plausible but not directly proven in every case:

- Intent: for many viral items, the motivation of originators (malice, financial motive, prank, or sincere error) is not documented and must be inferred from circumstantial evidence such as domain registration, timing, or monetization links.

- Coordination vs organic spread: while some campaigns have documented coordination (e.g., troll farms, astroturf operations described in investigative reporting), many chain messages can spread organically via ordinary users following social norms of forwarding; attributing coordination requires separate evidence such as leaked documents, platform investigations, or forensic analysis.

- Platform algorithmic causation: platforms’ recommendation systems plausibly amplify certain content, but proving a causal pathway for any single viral item requires platform data or audit-level evidence that is rarely public.

Contradicted or mixed evidence:

- Bot primacy: the claim that bots are the main cause of viral hoaxes is not consistently supported. In the major Twitter dataset referenced above, researchers found that bots spread true and false news at similar rates, implying that humans, not bots, accounted for the faster spread of falsehood overall; bots do, however, amplify content in specific episodes. Where sources conflict about the relative roles of bots and humans, the defensible position is uncertainty until platform-level forensic data are available.

Common misunderstandings

Several frequent misunderstandings recur when people discuss “online hoaxes”:

- Misunderstanding: Every viral hoax is a coordinated disinformation campaign. Reality: Some are deliberate campaigns, but many viral hoaxes are user-driven, folkloric, or commercial rather than centrally coordinated. Evidence for coordination must be shown, not assumed.

- Misunderstanding: Bots are always the driving force. Reality: Bots can amplify content, but large-scale human sharing, emotional novelty, and network structure often explain rapid spread; different incidents show different mixes of drivers.

- Misunderstanding: If something spreads widely, it must be false. Reality: True stories also go viral. In the Twitter data cited above, false items spread farther on average, but virality alone is not proof of falsehood.

- Misunderstanding: All chain messages are harmless folklore. Reality: Some chain items have led to real-world harm (panic, fraud, harassment), while others are mostly harmless urban legends; impact varies by case and context.

Evidence score (and what it means)

- Evidence score: 48/100

- Score drivers:
  - Direct, high-quality documentation exists for several central claims (large-scale Twitter analysis; historical chain-letter records).
  - Substantial, reputable survey evidence documents widespread exposure and concern but does not prove causation for specific items.
  - Platform-internal data needed to distinguish coordination from organic spread is often unavailable for individual incidents, limiting certainty.
  - Journalistic case studies and academic work show consistent mechanisms (novelty, emotion, forwarding norms), but heterogeneity across incidents reduces confidence in universal claims.
  - Conflicting accounts exist about the role of bots versus human sharing; authoritative reconciliation requires more transparent platform data.

Evidence score is not probability:

The score reflects how strong the documentation is, not how likely the claim is to be true.

This article is for informational and analytical purposes and does not constitute legal, medical, investment, or purchasing advice.

What we still don’t know

Important open questions remain for many viral items and overall patterns:

- For any specific chain message, who first authored it, and with what explicit intent? Attribution is often missing or contested.

- How much do platform ranking algorithms versus private-group forwarding contribute, in measurable terms, to the virality of a specific hoax? Platforms control critical data but rarely release the granular logs needed to answer this for particular items.

- To what degree are cross-platform flows (e.g., WhatsApp private groups to Twitter to Facebook) necessary for large cascades? Case-by-case forensic tracing is required.

- How effective are different interventions (labeling, reduced reach, digital literacy campaigns) in limiting harm for chain-message style hoaxes? The literature offers candidate interventions but mixed results in field settings.

FAQ

Q: What is meant by “Online Hoaxes, Chain Messages & Viral Disinformation” claims?

A: This phrase groups several related claims: that particular viral messages are false hoaxes, that chain-message social norms cause widespread forwarding, and that some viral items are deliberate disinformation campaigns designed to mislead. Each component is distinct and should be evaluated on its own evidence rather than treated as a single proven fact.

Q: Why do these chain messages spread so quickly?

A: Empirical research highlights several drivers: emotional salience and novelty increase re-sharing rates; social norms (pressure to forward) and private-channel ease (messaging apps) facilitate diffusion; and platform algorithms that prioritize engagement can amplify reach. Different incidents show different mixes of these factors.

Q: Are bots the main reason viral hoaxes spread?

A: Not necessarily. In a major Twitter study, researchers found that bots spread true and false news at similar rates, so the faster spread of false news was driven mainly by human sharing, although bots can and do amplify certain items. The balance between automated and human activity varies by platform, time, and case.

Q: How can I tell if a chain message is a hoax?

A: Check independent fact-checking organizations, look for original sources (news outlets, official statements), and be cautious of messages that demand forwarding, promise extraordinary rewards, or rely on anonymous testimony. If a message claims coordination or intent (e.g., foreign manipulation), look for investigative reporting or platform disclosures that provide evidence rather than relying on viral claims alone.

Q: Do “Online Hoaxes, Chain Messages & Viral Disinformation” claims always mean organized manipulation?

A: No. Many viral messages are best explained as folklore, rumors, or opportunistic clickbait rather than centralized disinformation. Proving organized manipulation requires documentary evidence (leaked directives, forensic account links, or platform transparency reports). Where sources conflict, researchers and journalists note the uncertainty and avoid broad attribution without evidence.

Myths-vs-facts writer who focuses on psychology, cognitive biases, and why stories spread.