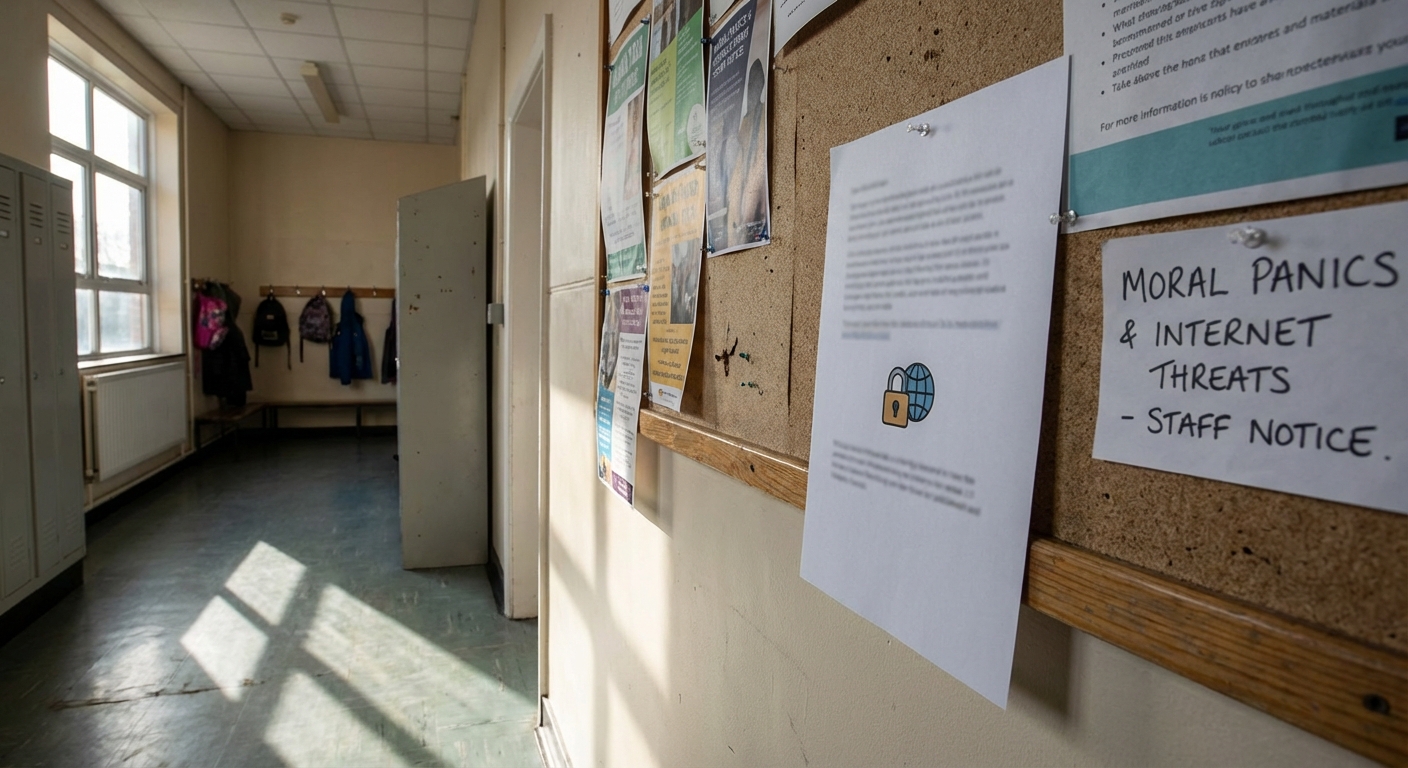

Intro: The items below summarise the arguments supporters cite for the claim labelled “Moral Panics & Media-Amplified Internet Threats”: that news media, social platforms, schools, and officials can amplify fears about online harms. This article treats the subject as a CLAIM to be analysed, not as an established fact, and lists a source type and a simple verification test for each argument.

The strongest arguments people cite

- Claim: Mass media routinely create “folk devils” and exaggerate new social problems, producing moral panics that outlast the original events. Source type: Classic sociological literature and textbooks. Verification test: Check primary sources and canonical summaries of moral panic theory (Stanley Cohen; Goode & Ben‑Yehuda) for definitions and mechanisms.

  Why supporters cite it: Stanley Cohen’s 1972 study of the “Mods and Rockers” introduced the idea that media framing can produce disproportionate public outrage and a “deviancy amplification” spiral; later scholars formalised criteria for moral panics.

- Claim: Online hoaxes or misreported threats (for example, the “Momo” and “Blue Whale” stories) show a pattern where anecdote + local reporting + national pickup generate worldwide fear with little confirmable evidence. Source type: Investigative journalism, charity statements, fact‑checks. Verification test: Compare early local reports, official statements from charities/police, and later fact‑checking or retractions.

  Why supporters cite it: News outlets and charities warned that coverage during the Momo and Blue Whale waves amplified unverified or weakly sourced claims, and that repeated coverage increased public anxiety even where direct links to harm remained unproven.

- Claim: Viral misinformation on social platforms is structurally amplified by attention economies: engagement incentives and sharing dynamics give sensational claims exaggerated visibility. Source type: Social science / platform studies. Verification test: Look for empirical analyses of misinformation diffusion and platform engagement metrics.

  Why supporters cite it: Empirical research and platform studies show large engagement spikes for false or sensational items, and analyses of fake‑news diffusion document concentrated sharing patterns and platform differences.

- Claim: Official warnings (school letters, police advisories) that are issued rapidly and without full verification can unintentionally help propagate and legitimise a threat narrative. Source type: Local authority press releases, media reports, expert commentary. Verification test: Gather original warnings, timeline of media pickups, and later corrections or retractions.

  Why supporters cite it: Coverage of Momo and similar scares often shows that school and police notifications, sometimes based on single anecdotes, were widely republished and cited as justification for national news coverage, creating a feedback loop.

- Claim: Some internet‑origin stories have produced real‑world harms, illustrating that amplification can translate to tangible risk (for example, the Comet Ping Pong / “Pizzagate” shooting). Source type: Court records, news reporting. Verification test: Verify incident reports and subsequent legal outcomes or official statements.

  Why supporters cite it: The 2016 Comet Ping Pong incident is frequently cited as evidence that online conspiracies amplified by social media can motivate violent acts, even when the underlying claim is false.

- Claim: Sensational coverage of alleged online challenges or threats may create copycat curiosity or produce harm by publicising the idea (self‑fulfilling risk). Source type: Academic commentary and NGO statements. Verification test: Check charity statements and academic commentary on how self‑harm is reported to see whether warnings raise risk along with awareness.

  Why supporters cite it: Child‑protection charities and researchers warned during Momo that wide publicity could encourage vulnerable people to search for or experiment with harmful content, even if the original claim was a hoax.

- Claim: Platform moderation and monetisation choices (demonetisation, removal, algorithmic downranking) change how quickly a claim spreads and how long it persists. Source type: Platform policy statements, empirical research. Verification test: Compare platform policy announcements with measured engagement trends and case studies of specific rumours.

  Why supporters cite it: Platform actions, both swift removals and inconsistent enforcement, are cited as part of the amplification ecosystem because they affect whether content is surfaced, suppressed, or driven into alternative networks. Empirical studies of misinformation diffusion document shifting platform dynamics.

How these arguments change when checked

When each argument is tested against records and academic work, three broad patterns emerge:

- Strongly documented mechanisms: The sociological concept of moral panic, the role of media framing, and the deviancy‑amplification process are well established in academic literature; foundational texts and contemporary summaries document the mechanics by which media and official responses can inflate perceived threats.

- Documented real harms from amplification: There are verified instances where amplified internet claims produced concrete harm: harassment campaigns, threats against individuals and businesses, and at least one armed intrusion at a restaurant tied to online conspiracism. These incidents demonstrate that amplification can lead to real-world consequences.

- Frequent overreach and weak causal links: In a number of high‑profile examples (notably the Blue Whale and Momo waves), subsequent fact‑checking, charity statements, and investigative reporting found little reliable evidence that the purported activity was widespread or directly caused the harms attributed to it. In those cases media amplification appears to have been driven by anecdote and fear rather than robust evidence.

What this means in practice is that the broad claim, “media‑amplified internet threats exist and matter,” is supported in part by strong theoretical work and clear examples of harm. However, specific threat narratives often lack corroborating evidence, and many widely shared stories have been debunked or remain unproven after scrutiny. Empirical studies of how false content spreads also show platform differences and time trends: some platforms amplified fake stories more during specific periods, while interventions reduced engagement in other cases.

Evidence score (and what it means)

- Evidence score: 62/100

Drivers of this score:

- Strong theoretical foundation: canonical sociology texts and later systematic treatments clearly document how moral panics form and how media framing can amplify concerns.

- Confirmed real‑world incidents: some amplified online narratives have produced verifiable harms (harassment, threats, at least one armed incident).

- Mixed empirical support for specific claims: high‑visibility examples (Momo, Blue Whale) were widely reported but often lacked robust linkage to the harms claimed, lowering overall documentation quality for the categorical statement that all media‑amplified internet threats are real and widespread.

- Platform research: rigorous diffusion studies show that engagement incentives can increase visibility of false claims, but platform behaviour has varied over time and across services.

- Evidence gaps and inconsistent recordkeeping: many local alerts, school notices, or early social posts are ephemeral or unpublished, making some claims impossible to fully verify or falsify after the fact.

Evidence score is not probability:

The score reflects how strong the documentation is, not how likely the claim is to be true.

This article is for informational and analytical purposes and does not constitute legal, medical, investment, or purchasing advice.

FAQ

What does “Moral Panics & Media-Amplified Internet Threats Claims” actually mean?

The phrase is used here as a label for a cluster of related assertions: that (1) news media and social platforms routinely sensationalise or legitimise weakly evidenced online threats, and (2) this amplification produces greater real‑world harm or policy responses than the underlying evidence warrants. The academic literature on moral panics and media amplification describes mechanisms for this type of dynamic.

Are there proven examples where media amplification caused harm?

Yes. The best‑documented examples involve harassment, threats, and a violent incident in 2016 in which an individual entered a restaurant searching for alleged crimes described in a conspiracy theory; the underlying claims were false. These incidents show that amplification can yield tangible harms even when the foundational allegation is false.

How often are viral online threats debunked after wide coverage?

Several high‑profile cases later attracted substantial scepticism and debunking: reporting on the Blue Whale and Momo episodes produced repeated clarifications from charities, platforms, and investigators who found limited or no evidence linking the supposed challenges to the harms initially claimed. That pattern—rapid rise in coverage followed by partial retractions or qualification—is commonly cited in analyses of modern moral panics.

How can I check whether a new online threat is credible?

Basic verification steps include: (1) identify the original source(s) and check whether primary authorities (medical bodies, police, platform statements) confirm the claim; (2) look for independent corroboration from reputable outlets or official records; (3) watch for rapid replication of the same anecdote across many outlets (a possible sign of a single unverified source being copied); and (4) consult fact‑checkers and specialist charities for context. Empirical research suggests that attention spikes and concentrated sharing patterns often drive visibility more than underlying truth.
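Step (3) above can be roughed out in code. The following is a minimal sketch, assuming you have already collected the text of each outlet's report by hand; the outlet names, the excerpts, the `copied_pairs` helper, and the 0.8 threshold are all hypothetical choices made for illustration, not part of any established fact‑checking tool. It uses Python's standard `difflib` module to flag pairs of reports that are nearly word‑for‑word identical, which may indicate one unverified anecdote being copied.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two texts, ignoring case and spacing."""
    norm_a = " ".join(a.lower().split())
    norm_b = " ".join(b.lower().split())
    return SequenceMatcher(None, norm_a, norm_b).ratio()


def copied_pairs(reports: dict[str, str], threshold: float = 0.8) -> list[tuple[str, str]]:
    """List outlet pairs whose report text is at least `threshold` similar."""
    return [
        (first, second)
        for first, second in combinations(sorted(reports), 2)
        if similarity(reports[first], reports[second]) >= threshold
    ]


# Hypothetical excerpts, invented for illustration only.
reports = {
    "outlet_a": "A parent told the school that a child saw a scary challenge online.",
    "outlet_b": "A parent told the school that a child saw a scary challenge online!",
    "outlet_c": "Police said they have received no confirmed reports linked to the challenge.",
}

print(copied_pairs(reports))
```

With these sample excerpts, only the `outlet_a` and `outlet_b` pair should be flagged. A high similarity score is a prompt to trace the wording back to its first appearance, not proof that a story is false: wire copy and shared press releases also produce near‑identical text.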

Will platforms always amplify false threats?

Not always. Platform behaviour has changed over time, and empirical studies document temporal and inter‑platform variation. Research shows periods of high amplification for false stories and later declines after policy or algorithmic changes; responses are heterogeneous and context dependent.

Is “moral panic” the same thing as legitimate concern?

No. Scholars distinguish between legitimate, evidence‑based social concerns and moral panics, which are characterised by disproportionate public alarm, rapid volatility, and a gap between evidence and reaction. That distinction is central to assessing specific internet threat narratives.
